The Cognitive Telescope

What the Flynn Effect Teaches Us About AI

In the movie “Idiocracy,” we meet Joe Bauers, an army librarian with an average IQ, who wakes up 500 years in the future to find himself the smartest man in a world that has, supposedly, gotten a lot dumber. It’s a funny, exaggerated story, and a great thought experiment. According to some studies, it might actually be coming true: IQ scores went up for a while, and now they might be going down. But the real story is likely much deeper. It may be a story of human adaptation.

For most of the twentieth century, IQ scores were climbing steadily upward—a phenomenon psychologists call the Flynn Effect, named after researcher James Flynn. It wasn’t that humans were evolving bigger brains or better genes. Instead, the world was changing around us. Industrial environments demanded new kinds of thinking: visual-spatial reasoning, abstract problem-solving, the ability to work with unfamiliar symbols and systems. Our minds adapted.

Think of it this way: we humans don’t just float around as isolated minds. We live in a cognitive ecosystem. And that ecosystem changes with the tools we use, the technology we interact with, and the opportunities we have. The Flynn Effect reveals an essential quality of humans: intelligence isn’t fixed; it’s a dance between capacity, adaptability, and environmental demands.

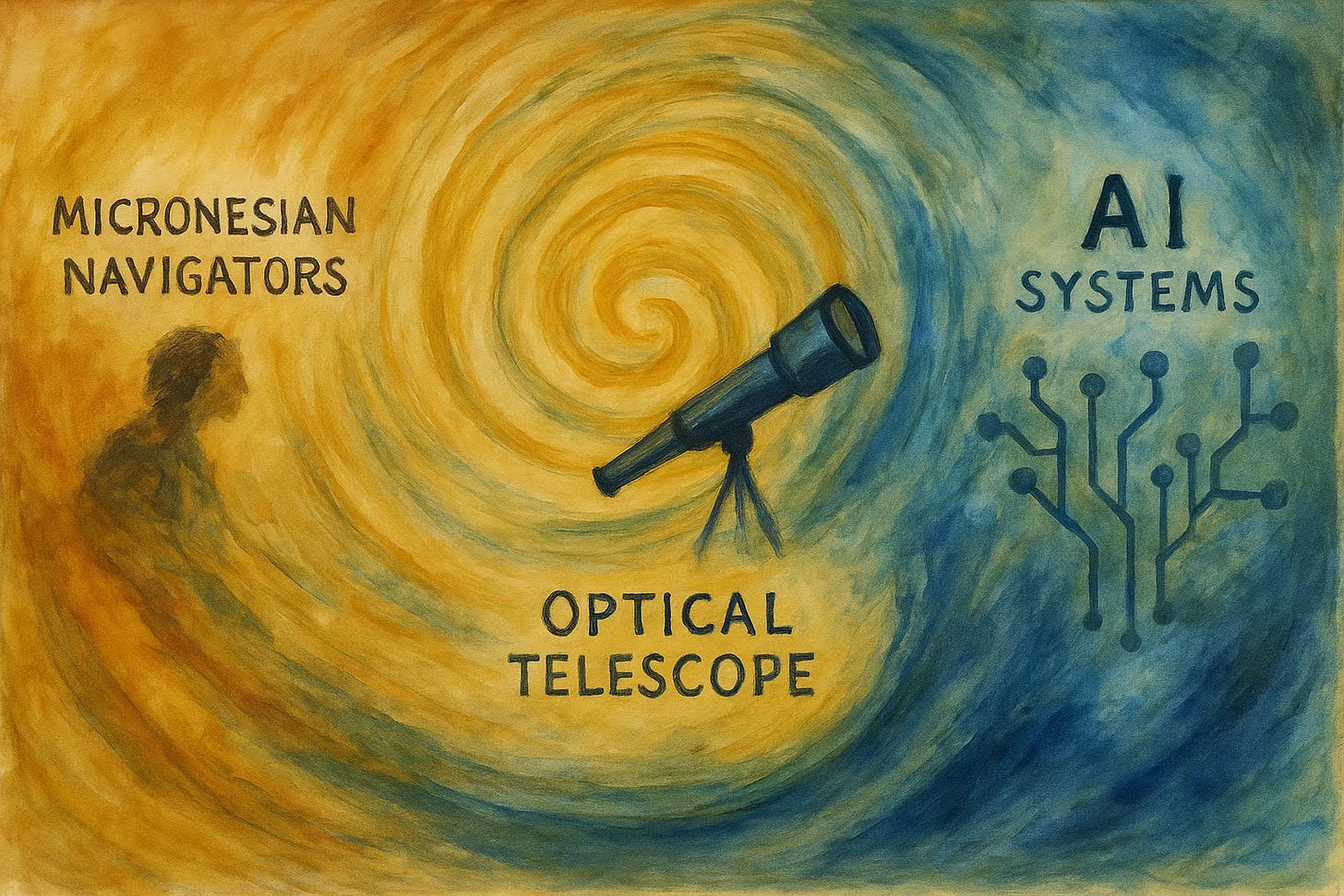

Consider the Micronesian navigators of the Pacific. For centuries, they crossed thousands of miles of open ocean using nothing but their eyes, their spatial reasoning, and their ability to read patterns in waves, winds, and stars. Their feats are proof of our extraordinary cognitive abilities: the same visual processing, pattern recognition, and spatial intelligence we have today. They just applied these capacities to the challenges of their own cognitive ecosystem, in ways our modern world might not have valued. Looking up at the night sky, they saw a navigation system, a celestial map that could guide them across vast distances.

Then came the telescope. It didn’t give humans new eyes or change our visual cortex. Instead, it extended our existing visual capacities, letting us see further and clearer than ever before. The very stars that Micronesian navigators used to cross the seas revealed themselves to be something far more extraordinary: other worlds, distant solar systems, entire galaxies. The telescope didn’t transform human vision. A constructed tube of metal and glass, it just amplified that vision, allowing us to perceive patterns and structures that had always been there but were previously invisible.

Today, we might be witnessing another such moment. AI isn’t transforming human intelligence—it’s extending it, like a cognitive telescope. Just as the telescope amplified our visual reach without changing our eyes, AI amplifies our pattern recognition, memory, and reasoning without altering our fundamental cognitive architecture. But just as telescopes only work when you already have functioning vision, cognitive telescopes only work when we’ve built foundational human capacities through play, physical interaction, and the essential learning that remains uniquely human.

Take the 2024 Nobel Prize in Chemistry, awarded partly for AI models that solved protein folding problems that had puzzled scientists for decades. The researchers weren’t replaced by AI; they used it to extend their existing abilities to recognize patterns and test hypotheses at scales previously impossible. They were doing what humans have always done: making meaning from complexity. AI just gave them a more powerful cognitive lens into a world where proteins could be folded over and over again, as the system improved across several iterations. Eventually, computational biologists and lab scientists joined Demis Hassabis and John Jumper of Google DeepMind. Together, they used this powerful telescope to peer into the microscopic world, working across an extended laboratory that could never be built on planet Earth. They were able to see connections that were always there but hidden under the surface of biological complexity.

Now, as our cognitive ecosystem shifts again—with AI, digital interaction, and new forms of information processing—it might look like traditional IQ measures are dropping. But maybe those tests are simply measuring yesterday’s cognitive environment, just as they might have missed the extraordinary spatial intelligence of Micronesian navigators because their skills didn’t fit industrial demands.

The question isn’t whether AI is making us smarter or dumber. It’s how we’re learning to extend our existing cognitive capacities through new tools. From navigators reading stars with naked eyes, to astronomers using telescopes to explore the cosmos, to scientists using AI to decode the mysteries of proteins—we may be witnessing the same human intelligence expressing itself through increasingly powerful instruments. Each tool teaches us to see patterns we never knew were there, while keeping our essential human capacities at the center of the discovery.

Humans are neither less intelligent nor more intelligent because of our environment. We remain the same adaptable and creative creatures we have always been. Armed with these new cognitive telescopes, IQ may one day be seen as a quaint measure that captured a small snapshot of human potential.

Great point from @samhardy700295!

I like this, because it aligns with everything I’m reading about cognitive development, psychology, trauma and therapy.

In each environment we adapt using the tools we have to hand . . .